Edge AI vs Cloud AI: Which Is Better? (2025)

Industry projections put the share of enterprise-generated data processed at the edge at 75% by 2025, a major shift from cloud-only AI. The choice between Edge and Cloud AI isn't about which is "better," but about which fits your use case for speed, cost, security, and scalability.

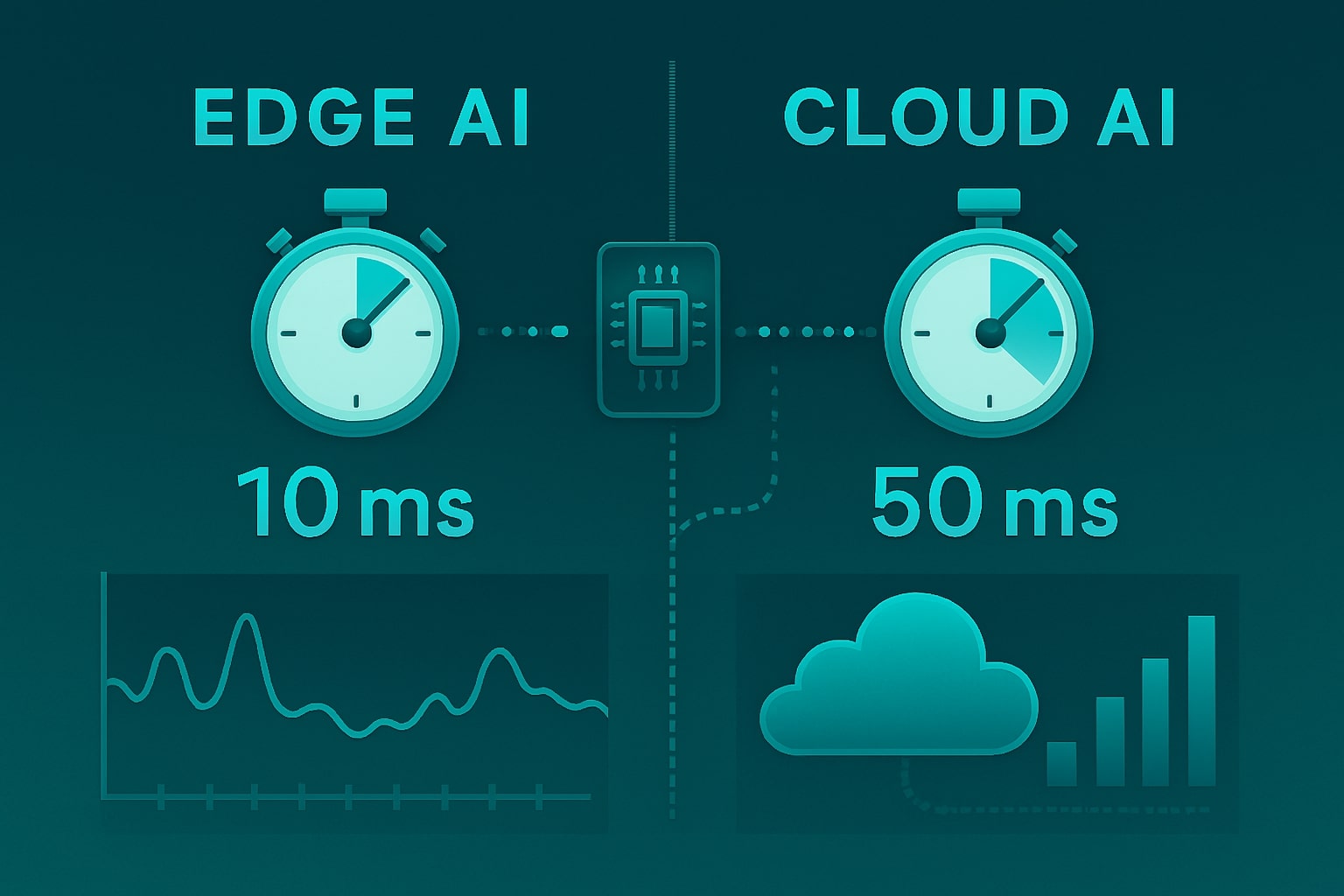

- Edge AI wins on latency: processes data locally on devices (drones, cameras, IoT), enabling real-time decisions in milliseconds. Ideal for autonomous vehicles, industrial robots, and smart factories.

- Cloud AI dominates on computational power: leverages massive data centers for training complex models, large-scale analytics, and healthcare AI where speed is less critical.

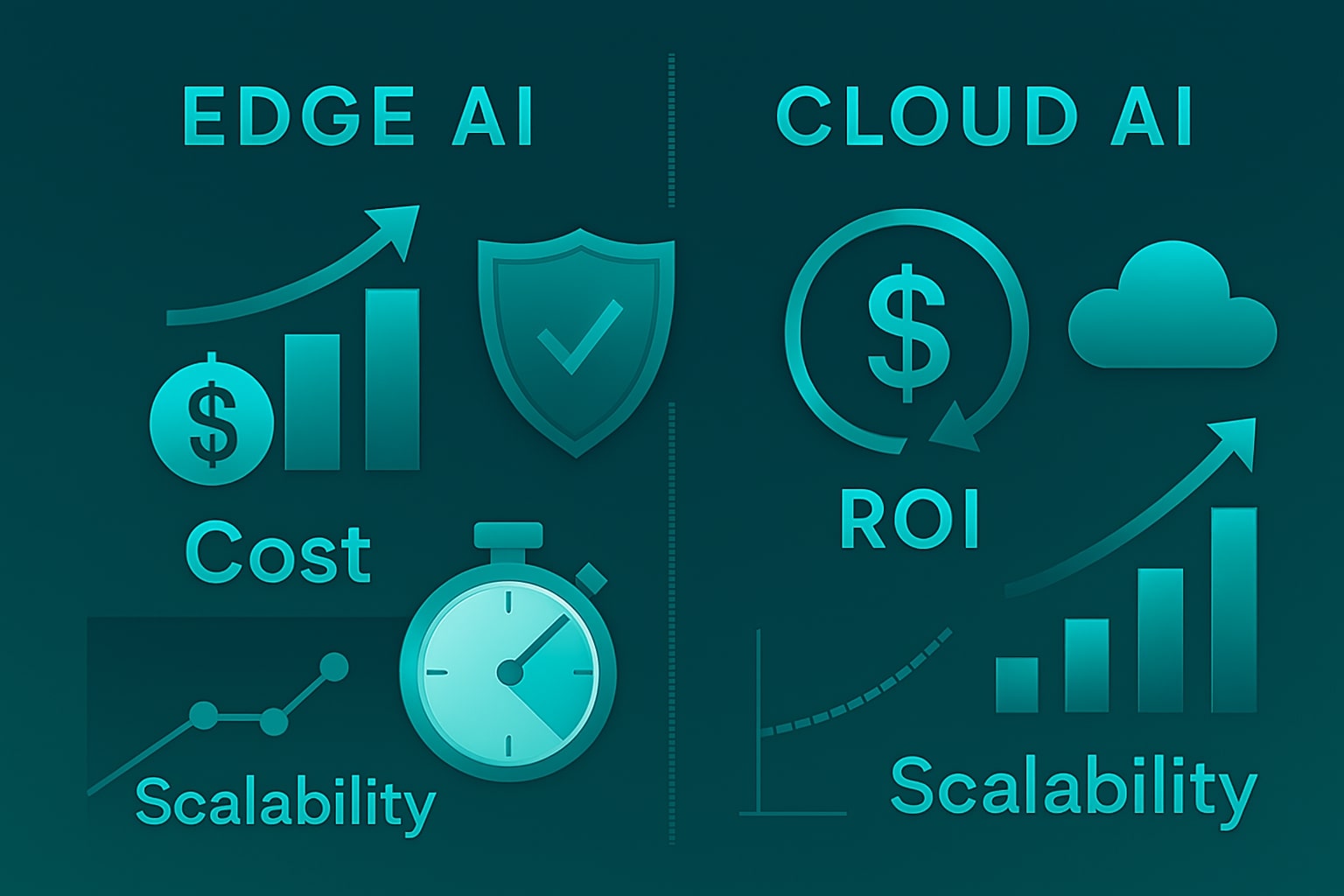

- Security: Edge keeps sensitive data local, reducing exposure; Cloud offers robust infrastructure but introduces risks in transit and from third-party management.

- Cost: Edge reduces bandwidth and cloud fees but requires upfront hardware investment. Cloud scales easily, but organizations reportedly waste about 32% of their cloud spend, so it needs active optimization.

- Hybrid AI is the future: platforms like AWS IoT Greengrass and Azure IoT Edge run AI on devices, then sync insights to the cloud, combining the best of both worlds.

- Emerging tech like TinyML and neuromorphic chips will make edge devices smarter and more efficient by 2030.

The smartest organizations aren't choosing one over the other; they're orchestrating both.

Margabagus.com – The artificial intelligence landscape is experiencing its most dramatic transformation yet. Industry projections indicate that by 2025, 75% of enterprise-generated data will be processed at the edge, marking a seismic shift from the cloud-centric approach that dominated the previous decade. This isn’t merely a technical evolution; it’s a fundamental reimagining of how businesses deploy AI to gain competitive advantage.

Consider this: the global edge AI market was valued at $20.45 billion in 2023 and is projected to skyrocket to $269.82 billion by 2032. Meanwhile, worldwide generative AI spending is expected to reach $644 billion in 2025, an increase of 76.4% from 2024. But here’s where it gets interesting—the battle isn’t just about market size. It’s about solving the million-dollar question: where should your AI actually live?
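Both projections imply steep growth. A quick arithmetic check, derived only from the figures quoted above (no additional data), backs out the compound annual growth rate implied for the edge AI market over 2023 to 2032 and the 2024 GenAI spending level that a 76.4% increase implies. The helper names are illustrative.

```python
# Back-of-the-envelope checks on the market figures quoted above.
# Inputs are the article's own numbers; the outputs are derived, not new data.

def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1

def prior_year_value(current_value: float, growth_rate: float) -> float:
    """Back out last year's value from this year's value and its growth rate."""
    return current_value / (1 + growth_rate)

# Edge AI market: $20.45B (2023) -> $269.82B (2032), nine compounding years.
edge_cagr = implied_cagr(20.45, 269.82, 2032 - 2023)

# GenAI spending: $644B in 2025, up 76.4% from 2024.
genai_2024 = prior_year_value(644.0, 0.764)

print(f"Implied edge AI CAGR, 2023-2032: {edge_cagr:.1%}")
print(f"Implied 2024 GenAI spending: ${genai_2024:.0f}B")
```

With these inputs, the edge AI projection implies roughly 33% annual growth, and the GenAI forecast implies 2024 spending of roughly $365 billion.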

The stakes couldn’t be higher. Make the wrong choice, and you’re looking at compromised performance, inflated costs, and security vulnerabilities that could derail your digital transformation. Make the right one, and you unlock unprecedented speed, efficiency, and competitive edge that positions your organization for the AI-driven future.

The Great AI Divide: Understanding the Core Architectures

Let me cut through the technical jargon and explain what we’re really comparing here. Think of cloud AI as your organization’s powerful but distant brain—brilliant at complex thinking but located far from where the action happens. Edge AI, conversely, is like having mini-brains distributed everywhere your business operates, making split-second decisions without waiting for instructions from headquarters.

Cloud AI leverages centralized data centers with massive computational resources. In a Cloud AI architecture, data is sent from the user’s device to a cloud server, where the AI model processes the information and sends back the results. This centralized approach offers virtually unlimited computing power, making it ideal for training complex models and handling large-scale data analytics.

Edge AI flips this model entirely. Edge AI refers to running AI algorithms locally on devices or “at the edge” of the network, such as smartphones, IoT devices, drones and cameras. Instead of sending data to distant servers, processing happens right where data is generated. This approach minimizes latency and enhances real-time processing capabilities, as decisions are made locally without relying on a network connection.

The hardware revolution driving this shift is remarkable. The NVIDIA Jetson Nano delivers 472 GFLOPS (FP16) of compute from a 128-core integrated NVIDIA GPU, paired with a quad-core 64-bit ARM CPU. Google's Edge TPU is a dedicated hardware accelerator designed for high-speed machine learning inference on edge devices and optimized to run TensorFlow Lite models.
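A peak GFLOPS figure translates into an upper bound on throughput: divide the usable compute by the cost of one inference. The sketch below assumes a hypothetical model cost of 8 GFLOPs per inference and 25% sustained utilization. Both are illustrative guesses, and in practice memory bandwidth often limits throughput before raw compute does.

```python
# Rough ceiling on inference throughput from a peak-compute spec.
# MODEL_GFLOPS and UTILIZATION are hypothetical values for illustration only.

def max_inferences_per_second(peak_gflops: float,
                              gflops_per_inference: float,
                              utilization: float) -> float:
    """Upper bound on inferences/s if compute were the only bottleneck."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return peak_gflops * utilization / gflops_per_inference

JETSON_NANO_PEAK_GFLOPS = 472.0  # NVIDIA's quoted peak (an FP16 figure)
MODEL_GFLOPS = 8.0               # hypothetical cost of one inference
UTILIZATION = 0.25               # hypothetical: real workloads rarely reach peak

ceiling = max_inferences_per_second(JETSON_NANO_PEAK_GFLOPS, MODEL_GFLOPS, UTILIZATION)
print(f"Upper bound: {ceiling:.2f} inferences per second")
```

Treat the result as a ceiling, not a forecast: measured throughput on real hardware is usually lower.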


Performance Showdown: Speed vs. Power

Here’s where the rubber meets the road. When I analyze performance metrics, the differences are stark and decisive.

Latency: The Edge Advantage

Latency is a silent killer for many AI applications. Imagine an autonomous vehicle navigating a busy city street or an industrial robot performing precision tasks on a factory floor. In these scenarios, even a delay of a few milliseconds can mean the difference between smooth operation and catastrophic failure.

Choosing the edge as the inference location is an effective way to reduce latency because processing happens directly on AI-enabled devices. The alternative, sending the input to the cloud for processing, adds a network round trip. In practical terms, edge AI can deliver response times measured in milliseconds, while cloud AI adds network transmission delays that can range from tens to hundreds of milliseconds, depending on distance and connection quality.
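The latency argument can be made concrete with a simple additive model: total response time is compute time plus any network round trip and serialization overhead. All numbers in this sketch are hypothetical placeholders rather than benchmarks. The point is that a faster cloud model can still miss a real-time deadline once the round trip is added.

```python
# Simple additive latency model for one inference request.
# All millisecond values are hypothetical placeholders; measure your own.
from dataclasses import dataclass

@dataclass
class LatencyBudget:
    compute_ms: float        # time to run the model itself
    network_rtt_ms: float    # round trip to the inference server (0 if local)
    serialization_ms: float  # encoding/decoding the request and response

    def total_ms(self) -> float:
        return self.compute_ms + self.network_rtt_ms + self.serialization_ms

# Hypothetical: the edge device runs a slower model locally, while the cloud
# runs a faster one but pays for the network round trip.
edge = LatencyBudget(compute_ms=20.0, network_rtt_ms=0.0, serialization_ms=0.0)
cloud = LatencyBudget(compute_ms=5.0, network_rtt_ms=80.0, serialization_ms=3.0)

DEADLINE_MS = 50.0  # hypothetical real-time requirement, e.g. a control loop

for name, budget in (("edge", edge), ("cloud", cloud)):
    verdict = "meets" if budget.total_ms() <= DEADLINE_MS else "misses"
    print(f"{name}: {budget.total_ms():.0f} ms, {verdict} the {DEADLINE_MS:.0f} ms deadline")
```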

Computational Power: Cloud’s Domain

When it comes to raw computational power, Cloud AI outshines Edge AI. Cloud-based solutions have access to virtually unlimited computing resources, including powerful GPUs and TPUs, making them ideal for tasks that require heavy computation, such as deep learning model training.

Edge AI, while offering lower latency, is constrained by the hardware of edge devices. Smartphones and IoT sensors generally have limited processing capability and tight power budgets. However, the gap is narrowing rapidly as edge hardware becomes more sophisticated.

The Business Impact: Cost, Security, and Scalability

Understanding the financial implications requires looking beyond the obvious infrastructure costs. Transferring large amounts of data to the cloud for processing can get expensive. When budgets are a concern for decision-makers, edge computing can be more cost-effective because processing occurs locally and less data has to be transmitted.

Recent research reveals a concerning trend: organizations waste 32% of what they spend on their cloud services. While this doesn’t automatically disqualify cloud AI, it underscores the importance of careful cost analysis.
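A rough way to compare the two cost structures is sketched below. It assumes the 32% waste figure applies as a share of total cloud spend, so total spend equals useful spend divided by (1 - 0.32). Every price, volume, and hardware cost is a hypothetical placeholder, and the edge side deliberately omits maintenance, power, and staffing, which a real analysis would include.

```python
# Hypothetical monthly cost comparison between cloud-centric and edge-centric
# inference. All prices and volumes are placeholders, not provider quotes.

CLOUD_WASTE_FRACTION = 0.32  # share of cloud spend wasted, per the figure cited above

def cloud_monthly_cost(gb_sent: float, transfer_price_per_gb: float,
                       useful_compute_cost: float,
                       waste_fraction: float = CLOUD_WASTE_FRACTION) -> float:
    """Total cloud bill when waste_fraction of the total goes unused."""
    useful_spend = gb_sent * transfer_price_per_gb + useful_compute_cost
    return useful_spend / (1 - waste_fraction)

def edge_monthly_cost(hardware_cost: float, amortization_months: int,
                      gb_sent: float, transfer_price_per_gb: float) -> float:
    """Amortized device cost plus the (much smaller) data still sent upstream."""
    return hardware_cost / amortization_months + gb_sent * transfer_price_per_gb

# Hypothetical scenario: the cloud receives all raw data (10,000 GB/month);
# edge devices send only 500 GB/month of results and summaries.
cloud = cloud_monthly_cost(gb_sent=10_000, transfer_price_per_gb=0.05,
                           useful_compute_cost=1_200)
edge = edge_monthly_cost(hardware_cost=36_000, amortization_months=36,
                         gb_sent=500, transfer_price_per_gb=0.05)

print(f"cloud: ${cloud:,.0f}/month, edge: ${edge:,.0f}/month")
```

Swapping in real quotes can easily flip the result: with low data volumes or short hardware lifetimes, the cloud may come out ahead.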

Security Considerations

Data security presents a complex equation. Because cloud processing happens far from where data is generated, data must travel over networks, and cybersecurity issues can arise in transit or through a provider’s negligence. Local processing on edge devices, by contrast, gives professionals more control and oversight, letting them keep security tight.

The privacy implications are particularly significant: processing data closer to the source minimizes the amount of sensitive information transmitted over networks.
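One way edge devices limit exposure is data minimization: the raw input (here, camera frames) is processed locally and only an aggregate result is transmitted. In this sketch, the per-frame detection labels stand in for the output of a hypothetical on-device object detector; the device ID and payload shape are also illustrative.

```python
import json
from collections import Counter

def summarize_detections(frame_detections: list[list[str]], device_id: str) -> dict:
    """Collapse per-frame detection labels into counts; no image data is included."""
    counts = Counter(label for frame in frame_detections for label in frame)
    return {
        "device_id": device_id,
        "frames_processed": len(frame_detections),
        "label_counts": dict(counts),
    }

# Hypothetical detector output for four frames. The frames themselves stay on the device.
detections = [["person", "car"], ["person"], [], ["person", "person"]]
summary = summarize_detections(detections, device_id="cam-entrance-01")

payload = json.dumps(summary)  # the only data sent over the network
print(payload)
```

The payload is a few dozen bytes of counts instead of a stream of images, which shrinks both the privacy surface and the bandwidth bill.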


Industry Applications: Where Each Approach Shines

Different industries are adopting these technologies based on their specific requirements. Let me break down the real-world applications:

Edge AI Success Stories

The automotive and transportation industry is expected to drive segment growth, owing to the rapid adoption of edge AI solutions in autonomous vehicles, which rely heavily on real-time data analysis. In March 2024, WeRide and Lenovo Vehicle Computing formed a strategic alliance to develop Level 4 autonomous driving solutions customized for commercial use.

Manufacturing represents another edge AI stronghold, where edge AI is revolutionizing predictive maintenance. By processing data directly on machines or nearby edge devices, manufacturers can detect and address potential issues in real time, minimizing downtime and improving overall equipment effectiveness.
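A minimal sketch of the kind of on-device check used in predictive maintenance: a rolling z-score detector that flags a sensor reading far outside the recent window. The window size, threshold, and vibration values are hypothetical, and production systems often use learned models rather than a fixed statistical rule.

```python
from collections import deque
import statistics

class RollingZScoreDetector:
    """Flags readings that sit far from the mean of a sliding window."""

    def __init__(self, window_size: int = 10, threshold: float = 3.0):
        self.window = deque(maxlen=window_size)
        self.threshold = threshold  # standard deviations that count as anomalous

    def update(self, value: float) -> bool:
        """Score value against the current window, then add it to the window."""
        is_anomaly = False
        if len(self.window) == self.window.maxlen:  # wait until the window is full
            values = list(self.window)
            mean = statistics.fmean(values)
            stdev = statistics.stdev(values)
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        self.window.append(value)
        return is_anomaly

# Hypothetical vibration amplitudes: steady operation, then a sudden spike.
readings = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.51, 0.50, 0.49, 0.52, 1.40]
detector = RollingZScoreDetector(window_size=10, threshold=3.0)
flags = [detector.update(r) for r in readings]
print(flags.index(True))  # position of the first flagged reading
```

Because flagged values are still appended to the window, a sustained fault will gradually widen the baseline; a real deployment might exclude anomalies from the window or raise an alert that persists.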

Cloud AI Dominance

Cloud AI excels in scenarios requiring massive computational resources. The IT & Telecom segment holds the largest share of the market, with 21.1% of revenue in 2024. The proliferation of connected IoT devices and the transition of telecom networks to 5G have created new opportunities and challenges for telecom operators.

Healthcare analytics, large-scale data processing, and complex AI model training remain cloud AI’s strongest use cases, where the need for enormous computational resources outweighs latency concerns.

The Emerging Hybrid Reality

As Daryl Plummer, Distinguished VP Analyst and Gartner Fellow, noted: “It is clear that no matter where we go, we cannot avoid the impact of AI. AI is evolving as human use of AI evolves. Before we reach the point where humans can no longer keep up, we must embrace how much better AI can make us.”

The future isn’t about choosing sides—it’s about intelligent orchestration. The convergence of edge and cloud computing is leading to a powerful hybrid approach that allows companies to leverage the strengths of both paradigms.

Platforms such as AWS IoT Greengrass and Microsoft Azure IoT Edge let companies deploy AI models to edge devices, update those models remotely, and send the resulting insights back to the cloud. This hybrid approach optimizes workload distribution, sending time-critical tasks to the edge while offloading complex computations to the cloud.
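The workload split described above can be sketched as a placement policy. This is a hypothetical illustration of the decision logic only; it is not the API of AWS IoT Greengrass or Azure IoT Edge, and the task fields, thresholds, and rule order are assumptions.

```python
from dataclasses import dataclass
from enum import Enum

class Placement(Enum):
    EDGE = "edge"
    CLOUD = "cloud"

@dataclass
class Task:
    name: str
    deadline_ms: float      # how quickly a result is needed
    est_gflops: float       # estimated compute cost
    has_raw_pii: bool       # whether the input contains sensitive raw data

EDGE_GFLOPS_LIMIT = 50.0    # hypothetical capacity of the edge device
CLOUD_RTT_MS = 100.0        # hypothetical round-trip time to the cloud

def place(task: Task) -> Placement:
    # 1. Keep sensitive raw data on the device.
    if task.has_raw_pii:
        return Placement.EDGE
    # 2. If the cloud round trip alone would exceed the deadline, stay local.
    if task.deadline_ms < CLOUD_RTT_MS:
        return Placement.EDGE
    # 3. Offload work that exceeds the edge device's capacity.
    if task.est_gflops > EDGE_GFLOPS_LIMIT:
        return Placement.CLOUD
    return Placement.EDGE

tasks = [
    Task("obstacle-detection", deadline_ms=30, est_gflops=5, has_raw_pii=False),
    Task("model-retraining", deadline_ms=3_600_000, est_gflops=10_000, has_raw_pii=False),
    Task("face-blurring", deadline_ms=200, est_gflops=20, has_raw_pii=True),
]
for t in tasks:
    print(f"{t.name}: {place(t).value}")
```

The rule order encodes priorities: privacy first, then latency, then capacity. A real system would also need a fallback for tasks that are both heavy and latency-critical, such as a smaller model on the device.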

Market Momentum Behind Hybrid Approaches

The hybrid cloud market is estimated at around $130.87 billion in 2024 and is projected to reach $329.72 billion by 2030, a compound annual growth rate of 16.65%. This growth reflects businesses recognizing that the future lies in intelligent workload distribution rather than all-or-nothing approaches.
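As a sanity check, compounding the 2024 estimate at the stated rate for six years should reproduce the 2030 forecast. This uses only the three figures quoted above; the function name is illustrative.

```python
def project(value: float, annual_rate: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return value * (1 + annual_rate) ** years

# Hybrid cloud market: $130.87B in 2024 at a 16.65% CAGR through 2030.
hybrid_2030 = project(130.87, 0.1665, 2030 - 2024)
print(f"Projected 2030 market: ${hybrid_2030:.2f}B")
```

The result rounds to the cited $329.72 billion, so the three figures are mutually consistent.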


Future-Proofing Your AI Strategy

Looking ahead, several technological developments will reshape this landscape further. The neuromorphic computing market has expanded rapidly, increasing from $1.44 billion in 2024 to $1.81 billion in 2025, a year-over-year growth rate of 25.7%. These brain-inspired chips promise even more efficient edge processing.

The global TinyML market is projected to reach $10.80 billion by 2030, growing at an impressive CAGR of 24.8% from 2024, enabling sophisticated AI models to run on increasingly resource-constrained devices.
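Running models on resource-constrained devices usually depends on compression. As one illustration (the market figure itself says nothing about technique), here is a minimal pure-Python sketch of symmetric int8 weight quantization, which stores each weight in 1 byte instead of the 4 bytes of float32. Toolchains such as TensorFlow Lite provide far more complete versions; the weights below are made up.

```python
# Minimal symmetric int8 quantization: one scale per tensor, zero point fixed at 0.

def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using q = round(w / scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q: list[int], scale: float) -> list[float]:
    """Approximate the original floats from the stored integers."""
    return [v * scale for v in q]

weights = [0.82, -1.27, 0.05, 0.40, -0.33]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

# Storage drops from 4 bytes per weight (float32) to 1 byte per weight (int8).
print(f"q={q}, scale={scale:.4f}, max error={max_error:.4f}, "
      f"size {4 * len(weights)} B -> {len(q)} B")
```

Each restored weight is within half a quantization step (scale / 2) of the original, which is why int8 models often lose little accuracy while using a quarter of the memory.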

Meanwhile, Gartner predicts significant changes in AI governance and management. Gene Alvarez, Distinguished VP Analyst with Gartner, emphasizes that by 2028, at least 15% of day-to-day work decisions will be made autonomously through agentic AI, up from 0% in 2024.

However, John-David Lovelock, Distinguished VP Analyst at Gartner, warns: “Expectations for GenAI’s capabilities are declining due to high failure rates in initial proof-of-concept work and dissatisfaction with current GenAI results”. This suggests organizations must be strategic rather than reactive in their AI deployment decisions.

Sources

- ResearchAndMarkets – Hybrid Cloud Market Analysis 2024-2030

- Fortune Business Insights – Edge AI Market Report 2023-2032

- Gartner – AI Spending Forecast and Strategic Predictions 2025

- Grand View Research – Edge AI Industry Analysis 2024-2030

- NVIDIA Corporation – Jetson Nano Technical Specifications

- Google Cloud – Edge TPU Performance Documentation

- IBM Research – Edge AI vs Cloud AI Comparative Analysis

- Omdia Technology Analysis – AI Edge Platforms 2024

- TECHi Industry Report – Edge vs Cloud Computing 2025

- Various industry analyst reports and expert interviews conducted in 2024-2025
