TL;DR (last updated Oct 2025): Hands-on review of ElevenLabs for Business Voice across brand voice control, audio quality, licensing, pricing, and team workflows.

- Licensing: Free is non-commercial and requires attribution; all paid tiers include a commercial license.

- Cloning & consent: Professional Voice Cloning = your own verified voice only. Instant cloning requires rights and explicit consent. Deceptive impersonation is prohibited. See the consent policy and checklist below.

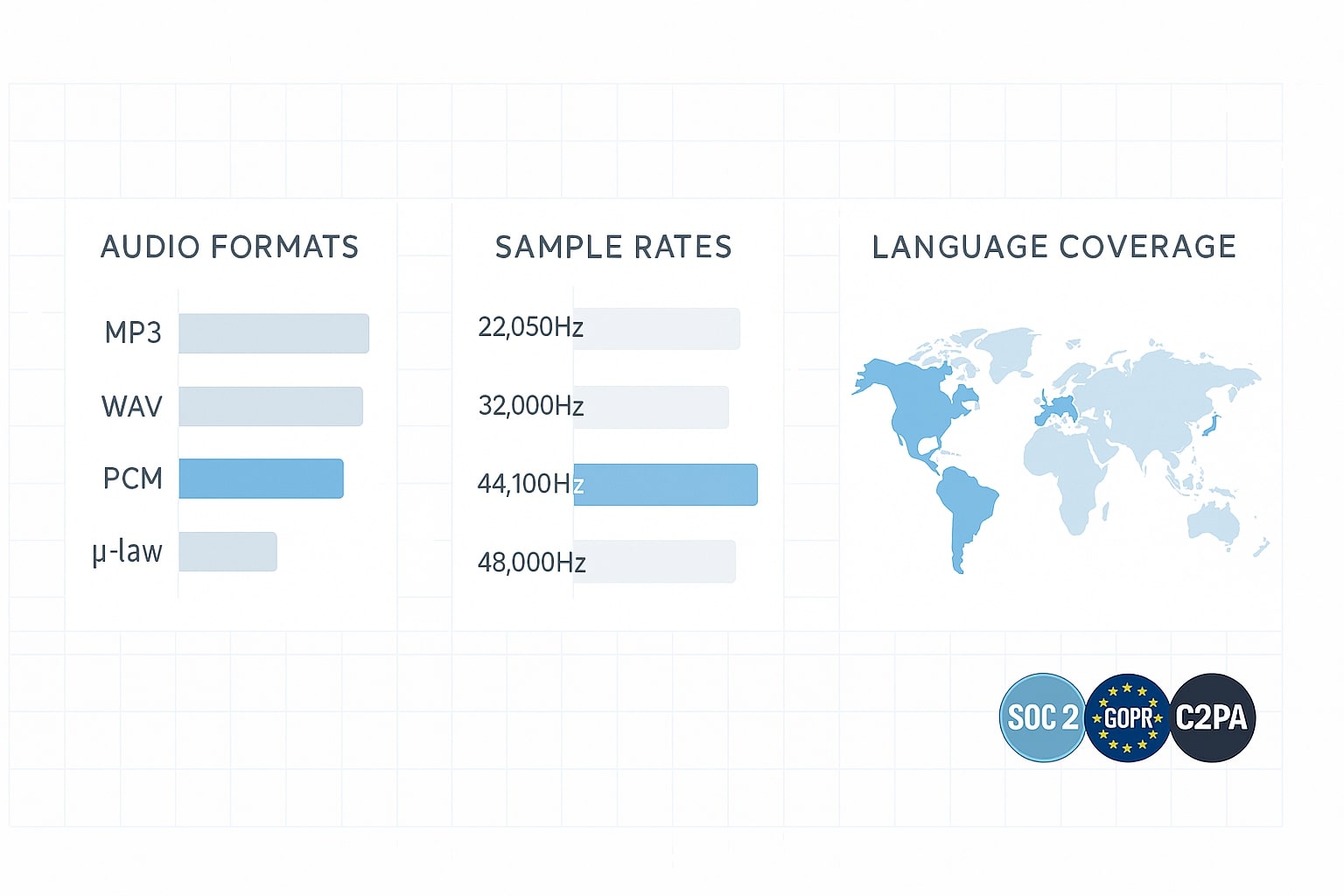

- Audio & models: Up to 44.1 kHz WAV (16-bit) on higher tiers and 192 kbps MP3 on Creator+; Eleven v3 covers 70+ languages.

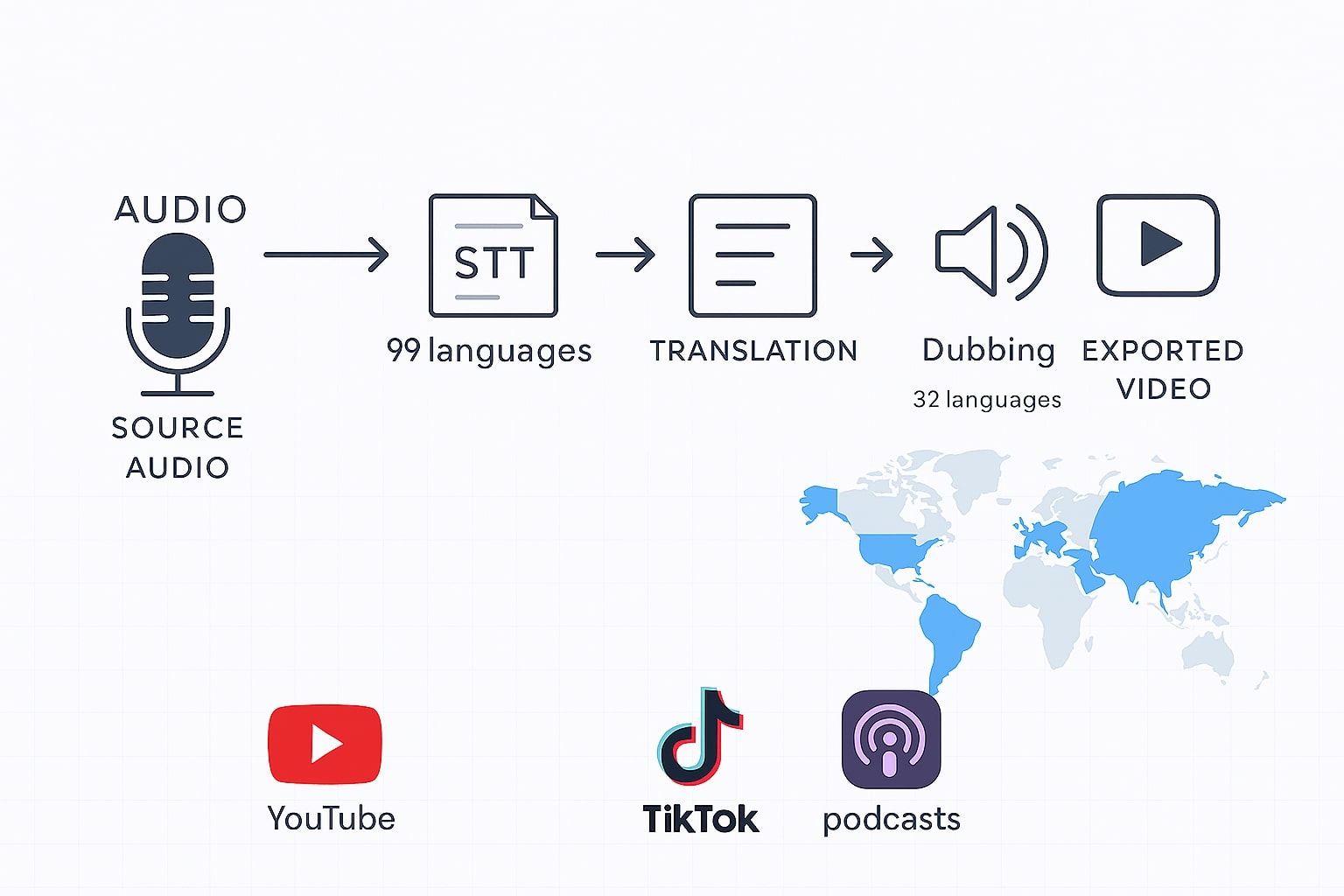

- Dubbing & STT: Native dubbing across ~32 languages; Speech-to-Text supports ~99 languages for multilingual workflows.

- Pricing (Oct 2025): Free (10k credits), Starter $5 (30k), Creator $22 (100k, PVC + 192 kbps), Pro $99 (500k, 44.1 kHz PCM via API), Scale $330 (2M + seats), Business $1,320 (11M + seats). Usage-based overage available.

Why ElevenLabs Matters for Brand Voice in 2025

Margabagus.com – The AI-audio market matured fast, and ElevenLabs moved from creator tooling to enterprise features without losing speed. In January 2025 it raised a large round at a multi-billion valuation and added products beyond TTS, including speech-to-text, dubbing, and an enterprise stack designed for teams and compliance. For you, that means a credible vendor for production work rather than a “toy” tool, which matters if your marketing and support teams now rely on a consistent, on-brand voice across channels.[1][4][14]

Brand Voice Controls: Cloning, Libraries, and Guardrails

Getting a consistent brand voice is not just about cloning; it’s about controls and policy. ElevenLabs offers a Voice Library (ready-made voices for brand use) and Professional Voice Cloning (PVC) when you need your own signature voice. PVC introduces verification steps before training, and ElevenLabs’ help docs state you can only create a PVC of your own voice; cloning someone else’s voice—even with consent—is not permitted under PVC. That’s a strong guardrail against misuse.[12][13]

The Privacy Policy explains consent/identity checks (e.g., verifying that a recording truly belongs to the speaker), and the Prohibited Use Policy bans deceptive impersonation, harassment, and political-process abuse. If you operate in regulated markets or handle public-facing campaigns, these controls reduce risk at procurement time and during audits.[11][3]

Finally, ElevenLabs provides an AI Speech Classifier to detect whether an audio clip likely came from its models, though its reliability is limited on v3 output. This doesn’t replace governance, but it adds traceability if your workflow requires content provenance.[10]


Audio Quality in Practice: Models, Formats, and Languages

When I say “quality,” I mean both timbre realism and technical fidelity. On higher-tier plans, output quality reaches 16-bit, 44.1 kHz WAV or 192 kbps MP3 (Studio/API), while lower tiers cap at 128 kbps MP3. If your channels include broadcast, audio ads, or premium podcasts, those higher tiers matter; if you publish mainly for web and social, 128 kbps MP3 can still be sufficient.[6][5]
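The tier differences are easier to weigh in bytes. The sketch below is our own back-of-envelope helper in Python (not a vendor tool) that computes the data rate and size per minute for the formats above. It assumes mono output and ignores container headers and MP3 framing overhead, so treat the numbers as approximations.

```python
# Back-of-envelope data rates for the output formats discussed above.
# Assumption: mono (1 channel) output; container/header overhead is ignored.


def pcm_bits_per_second(sample_rate_hz: int, bit_depth: int, channels: int = 1) -> int:
    """Uncompressed PCM/WAV bit rate: samples/s x bits/sample x channels."""
    return sample_rate_hz * bit_depth * channels


def megabytes_per_minute(bits_per_second: int) -> float:
    """Convert a bit rate to decimal megabytes (10^6 bytes) per minute."""
    return bits_per_second * 60 / 8 / 1_000_000


FORMATS = {
    "WAV 16-bit / 44.1 kHz": pcm_bits_per_second(44_100, 16),
    "MP3 192 kbps": 192_000,
    "MP3 128 kbps": 128_000,
    "mu-law 8-bit / 8 kHz (telephony)": pcm_bits_per_second(8_000, 8),
}

if __name__ == "__main__":
    for name, bps in FORMATS.items():
        print(f"{name:34s} {bps / 1000:7.1f} kbps  {megabytes_per_minute(bps):5.2f} MB/min")
```

At these rates a 10-minute explainer is roughly 53 MB as 44.1 kHz WAV versus about 14 MB as 192 kbps MP3, which is why WAV is usually kept for masters.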

Model choice also affects the result. Eleven v3 focuses on expressive control and claims 70+ languages, while Multilingual v2 is the steady, lifelike workhorse; Flash targets low latency for conversational UX. For multilingual brands, v3’s reach and Multilingual v2’s stability cover most use cases; for chat/agent flows, Flash’s snappier response improves UX.[15][16]
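If you route jobs programmatically, the trade-offs above reduce to a small lookup. This is a minimal sketch: the use-case categories are ours, and the model ID strings (`eleven_v3`, `eleven_multilingual_v2`, `eleven_flash_v2_5`) are assumptions to confirm against ElevenLabs’ current model list.

```python
from enum import Enum


class UseCase(Enum):
    EXPRESSIVE_NARRATION = "expressive narration"  # ads, explainers, storytelling
    STABLE_LONGFORM = "stable long-form"           # steady multilingual voice-over
    CONVERSATIONAL = "conversational"              # chat agents, IVR, live widgets


# Assumed model IDs; verify against the provider's current model list.
MODEL_FOR_USE_CASE = {
    UseCase.EXPRESSIVE_NARRATION: "eleven_v3",
    UseCase.STABLE_LONGFORM: "eleven_multilingual_v2",
    UseCase.CONVERSATIONAL: "eleven_flash_v2_5",
}


def pick_model(use_case: UseCase, needs_live_turn_taking: bool = False) -> str:
    """Route a job to a model following the trade-offs described above.

    Latency wins whenever a person is waiting on the reply, whatever the use case.
    """
    if needs_live_turn_taking:
        return MODEL_FOR_USE_CASE[UseCase.CONVERSATIONAL]
    return MODEL_FOR_USE_CASE[use_case]


if __name__ == "__main__":
    print(pick_model(UseCase.EXPRESSIVE_NARRATION))
    print(pick_model(UseCase.STABLE_LONGFORM, needs_live_turn_taking=True))
```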

From a delivery standpoint, the API supports multiple formats (MP3/PCM/μ-law/A-law/Opus) and sample rates (22.05–44.1 kHz, with PCM 44.1 kHz requiring Pro+). If you rely on telephony, μ-law/A-law are there; if you need a mastering-grade source for broadcast or premium audio, use 44.1 kHz WAV.[5][9]
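To catch plan-gate errors before a request leaves your pipeline, you can encode the gates described above in code. The sketch builds, but does not send, a request using Python’s standard library. It assumes the REST shape `POST /v1/text-to-speech/{voice_id}` with an `xi-api-key` header and an `output_format` query parameter; the format IDs and the minimum-plan table are our reading of this review’s claims, so verify both against the current API reference.

```python
import json
import urllib.request

PLAN_ORDER = ["free", "starter", "creator", "pro", "scale", "business"]

# Minimum plan per output format, per the plan gates described in this review.
# Format IDs follow a codec_samplerate[_bitrate] naming pattern; treat them as
# assumptions and confirm against the current API docs.
MIN_PLAN_FOR_FORMAT = {
    "mp3_44100_128": "free",
    "mp3_44100_192": "creator",
    "pcm_22050": "free",
    "pcm_44100": "pro",
    "ulaw_8000": "free",  # telephony
}


def format_allowed(plan: str, output_format: str) -> bool:
    """True if `plan` meets the minimum tier for `output_format`."""
    required = MIN_PLAN_FOR_FORMAT[output_format]
    return PLAN_ORDER.index(plan) >= PLAN_ORDER.index(required)


def build_tts_request(api_key: str, voice_id: str, text: str, model_id: str,
                      output_format: str, plan: str) -> urllib.request.Request:
    """Build (but do not send) a text-to-speech request, failing fast on plan gates."""
    if not format_allowed(plan, output_format):
        raise ValueError(f"{output_format} requires the "
                         f"{MIN_PLAN_FOR_FORMAT[output_format]} plan or higher")
    url = (f"https://api.elevenlabs.io/v1/text-to-speech/{voice_id}"
           f"?output_format={output_format}")
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it; retries, error handling, and streaming are deliberately left out.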

Dubbing, Speech-to-Text, and Multilingual Reach

For global content, Dubbing translates and recreates delivery in about 32 languages, preserving timing and tone, useful for product explainers, webinars, and UGC. In parallel, the Speech-to-Text API (launched 25 Feb 2025) supports ~99 languages, so you can transcribe source tracks and then re-voice with consistent tone. The stack is practical for “record once, publish everywhere.”[7][8]
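Structurally, “record once, publish everywhere” is a fan-out: one transcription feeding many translate-and-voice passes. The Python sketch below shows that shape in a provider-agnostic way. `transcribe`, `translate`, and `synthesize` are hypothetical callables you would wire to your STT, translation, and TTS services; they are not ElevenLabs SDK functions. Dubbing covers these steps end to end, so a manual pipeline like this mainly makes sense when you want to review or edit the script between steps.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class LocalizedAsset:
    language: str
    script: str
    audio: bytes


def record_once_publish_everywhere(
    source_audio: bytes,
    target_languages: List[str],
    brand_voice_id: str,
    transcribe: Callable[[bytes], str],          # hypothetical STT hook
    translate: Callable[[str, str], str],        # hypothetical MT hook: (text, lang)
    synthesize: Callable[[str, str, str], bytes],  # hypothetical TTS hook: (text, voice, lang)
) -> Dict[str, LocalizedAsset]:
    """Transcribe once, then translate and re-voice per language with ONE voice ID."""
    source_script = transcribe(source_audio)  # STT runs a single time
    assets: Dict[str, LocalizedAsset] = {}
    for lang in target_languages:
        script = translate(source_script, lang)
        # Reusing the same brand_voice_id for every language keeps tone consistent.
        audio = synthesize(script, brand_voice_id, lang)
        assets[lang] = LocalizedAsset(lang, script, audio)
    return assets
```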

Ecosystem signal also matters. Spotify’s audiobook pipeline now openly accepts AI-narrated titles and has highlighted ElevenLabs as a production option, pushing synthetic narration closer to mainstream distribution. If long-form audio is on your roadmap, that’s a material indicator.[2]

Pricing and Licensing: What Businesses Actually Pay

This is where most teams decide. Free provides ~10 minutes/month and API access, but it requires attribution and isn’t licensed for commercial use. Starter ($5/mo) adds a commercial license and Instant Voice Cloning; Creator ($22/mo; $11 first month) unlocks Professional Voice Cloning and 192 kbps quality via API; Pro ($99/mo) enables 44.1 kHz PCM via API. Scale ($330/mo) and Business ($1,320/mo) add seats, concurrency, and extras like multiple PVCs and low-latency pricing; Enterprise is custom with SSO, SLAs/DPA, HIPAA BAAs and managed services. Credits map to minutes, with usage-based billing for overages. For brand programs, Creator+ is often the floor; Pro or Scale is safer for teams.[1]
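A quick way to apply this pricing is to pick the cheapest tier that covers both your monthly credit volume and the features you need. The sketch below encodes the Oct 2025 list prices and feature gates summarized above; the feature labels are ours, overage and Creator’s discounted first month are ignored, and prices should be re-checked against the live pricing page.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set

_PAID = frozenset({"commercial", "ivc"})  # Starter+: commercial license, Instant Voice Cloning
_CREATOR = _PAID | {"pvc", "mp3_192"}     # Creator+: PVC, 192 kbps MP3
_PRO = _CREATOR | {"pcm_44k"}             # Pro+: 44.1 kHz PCM via API
_TEAM = _PRO | {"seats"}                  # Scale/Business: extra seats


@dataclass(frozen=True)
class Plan:
    name: str
    usd_per_month: float
    credits: int
    features: FrozenSet[str]


# Oct 2025 list prices and included monthly credits, as summarized in this review.
PLANS: List[Plan] = [
    Plan("Free", 0, 10_000, frozenset()),
    Plan("Starter", 5, 30_000, _PAID),
    Plan("Creator", 22, 100_000, _CREATOR),
    Plan("Pro", 99, 500_000, _PRO),
    Plan("Scale", 330, 2_000_000, _TEAM),
    Plan("Business", 1_320, 11_000_000, _TEAM),
]


def usd_per_1k_credits(plan: Plan) -> float:
    """Effective list price per 1,000 included credits."""
    return plan.usd_per_month / (plan.credits / 1000)


def cheapest_plan(monthly_credits: int, required: Set[str]) -> Optional[Plan]:
    """Cheapest plan whose included credits and features cover the need, ignoring overage."""
    for plan in sorted(PLANS, key=lambda p: p.usd_per_month):
        if plan.credits >= monthly_credits and required <= plan.features:
            return plan
    return None  # beyond Business: Enterprise is custom-priced
```

On these list prices the effective rate works out to about $0.167 per 1k credits on Starter, $0.22 on Creator, $0.198 on Pro, $0.165 on Scale, and $0.12 on Business, so Creator is the most expensive per credit even though it is the usual pilot tier.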

Compliance, Consent, and Real-World Risk

If you operate in the U.S., remember that BIPA (Illinois) treats voiceprints as biometric identifiers and requires written (including electronic) consent, with 2024 amendments limiting per-incident damages but keeping consent central. ElevenLabs’ policies lean the same way: explicit consent, verification, and bans on deceptive impersonation. In practice, your counsel should align internal consent flows with your cloning setup and keep audit trails (voice rights, intended uses, revocation).[17][18][3][11]

A last note on bias and provenance: academic work in 2025 still finds variability across accents and pitches, and ElevenLabs’ classifier notes limited reliability on v3 audio. Run brand-fit tests with your audience segments and keep disclosure metadata where appropriate.[19][10]
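One lightweight way to keep disclosure metadata with each file is a JSON sidecar that records the model, voice, and consent record, bound to the exact audio bytes by a SHA-256 hash. This is our own minimal convention sketched in Python; the field names are hypothetical, and it is neither a provenance standard nor a substitute for ElevenLabs’ classifier.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def disclosure_sidecar(audio: bytes, model_id: str, voice_id: str,
                       consent_record_id: str,
                       created_at: Optional[datetime] = None) -> str:
    """Return JSON describing how, and under which consent, an audio file was generated."""
    created_at = created_at or datetime.now(timezone.utc)
    record = {
        "synthetic_voice": True,                      # explicit disclosure flag
        "sha256": hashlib.sha256(audio).hexdigest(),  # binds metadata to these exact bytes
        "model_id": model_id,
        "voice_id": voice_id,
        "consent_record_id": consent_record_id,       # link back to your consent registry
        "created_at": created_at.isoformat(),
    }
    return json.dumps(record, indent=2, sort_keys=True)


def sidecar_matches(audio: bytes, sidecar_json: str) -> bool:
    """True if the audio is byte-identical to the file the sidecar was written for."""
    return json.loads(sidecar_json)["sha256"] == hashlib.sha256(audio).hexdigest()
```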

Voice Cloning Consent Policy (2025): What businesses must collect & document

Short version: Professional Voice Cloning allows cloning your own voice only (identity verification required). Instant/standard cloning flows require you to confirm you have the right and consent to use any voice. Keep explicit, written consent on file and follow prohibited-use and safety rules before commercial deployment. (Last checked: Oct 2025)

What the rules say (plain English)

- Professional Voice Cloning (PVC): clone only your own voice; even with consent, you may not clone someone else’s voice. Identity verification is part of the PVC flow.

- Instant/standard cloning: you must confirm you have the right and consent to use the voice you upload or reference.

- Privacy & consent handling: when a third party authorizes use of their voice, the platform may process recordings to verify it’s their voice and that they consented.

- Safety & prohibited uses: no deceptive impersonation or illegal/harmful use; follow the provider’s Prohibited Use Policy and Safety guidelines.

What a valid consent should include

- Identity proof of the voice owner (full name, email, optional selfie or short verification phrase).

- Explicit consent statement authorizing capture, cloning, synthesis, and commercial use of their voice.

- Scope (projects/channels), territory, duration, and revocation procedure.

- Restrictions (e.g., no political messages, no endorsements, no obscene/illegal content).

- Data handling: storage location, retention, deletion on request.

- Compensation/consideration (if applicable).

- Signature & timestamp (e-signature acceptable) + file attachments (raw sample, ID where lawful).
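If you track consents in code or a database, the checklist above maps naturally onto a record type with a completeness check. The field names below are hypothetical and mirror the list item by item; the check only flags missing items, and whether a given consent is legally sufficient remains a question for your counsel.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ConsentRecord:
    talent_name: str                      # identity proof
    talent_email: str                     # identity proof
    consent_statement: str                # capture, cloning, synthesis, commercial use
    scope: List[str]                      # projects / channels
    territory: str
    duration_months: int
    revocation_procedure: str
    restrictions: List[str]               # e.g. no political messages, no endorsements
    data_handling: str                    # storage location, retention, deletion on request
    signed_on: Optional[date] = None      # signature & timestamp (e-signature acceptable)
    compensation: Optional[str] = None    # optional: "if applicable"
    attachments: List[str] = field(default_factory=list)  # raw sample, ID where lawful


def missing_items(rec: ConsentRecord) -> List[str]:
    """List checklist items that are empty; an empty result means the record is complete."""
    required_text = {
        "identity (name)": rec.talent_name,
        "identity (email)": rec.talent_email,
        "consent statement": rec.consent_statement,
        "territory": rec.territory,
        "revocation procedure": rec.revocation_procedure,
        "data handling": rec.data_handling,
    }
    problems = [item for item, value in required_text.items() if not value.strip()]
    if not rec.scope:
        problems.append("scope")
    if rec.duration_months <= 0:
        problems.append("duration")
    if not rec.restrictions:
        problems.append("restrictions")
    if rec.signed_on is None:
        problems.append("signature & timestamp")
    return problems
```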

Template — short consent form (copy/paste)

VOICE CLONING CONSENT (Short Form) – Oct 2025

I, [Full Name] ("Talent"), hereby grant [Company] the right to capture, model, and synthesize my voice for AI voice generation ("AI Voice") using ElevenLabs or similar tools. I confirm that this is my voice and I am authorized to grant this consent.

Scope: [Project/Channel], Territory: [Global/Region], Duration: [X months/years].

Allowed Use: Marketing, support, training, [other]. No political messages, endorsements, obscene or illegal content.

Attribution: [Required/Not required]. Compensation: [Amount/None].

Revocation: I may revoke further use with written notice to [email]. Company will cease new use within [X] days.

Data handling: Company will store recordings/models securely, retain for [X], and delete upon verified request, subject to legal obligations.

Signed: ____________________ Date: ______________ Email: ____________________

Attachments (optional): verification phrase audio, selfie/ID (as permitted by law).

Template — enterprise consent & verification flow

- Invite Talent via secure form (collect identity + consent + verification phrase).

- Verify voice ownership (short scripted phrase) and match to consent record.

- Record scope (projects, channels, duration, restrictions) in your consent registry.

- Create/label the voice in platform; restrict access (role-based) and log usage.

- Pre-publish check: prohibited-use screen (no impersonation, illegal content), brand/legal review.

- Retention & revocation: document purge steps if Talent revokes consent; update downstream assets.
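The flow above behaves like a state machine: each step unlocks the next, and revocation must be reachable from any consented state. Below is a minimal Python sketch, with state names of our own choosing, that refuses out-of-order steps and only allows generation once a voice is fully active.

```python
from enum import Enum, auto
from typing import List


class VoiceState(Enum):
    INVITED = auto()     # secure form sent to Talent
    CONSENTED = auto()   # identity + consent + verification phrase collected
    VERIFIED = auto()    # phrase matched to the consent record
    REGISTERED = auto()  # scope, duration, restrictions in consent registry
    ACTIVE = auto()      # voice created and labeled; role-based access on
    REVOKED = auto()     # Talent withdrew consent; no new generations
    PURGED = auto()      # recordings/models deleted per documented steps


ALLOWED = {
    VoiceState.INVITED: {VoiceState.CONSENTED},
    VoiceState.CONSENTED: {VoiceState.VERIFIED, VoiceState.REVOKED},
    VoiceState.VERIFIED: {VoiceState.REGISTERED, VoiceState.REVOKED},
    VoiceState.REGISTERED: {VoiceState.ACTIVE, VoiceState.REVOKED},
    VoiceState.ACTIVE: {VoiceState.REVOKED},
    VoiceState.REVOKED: {VoiceState.PURGED},
    VoiceState.PURGED: set(),
}


class VoiceLifecycle:
    def __init__(self, voice_label: str):
        self.voice_label = voice_label
        self.state = VoiceState.INVITED
        self.history: List[VoiceState] = [VoiceState.INVITED]  # simple transition log

    def advance(self, new_state: VoiceState) -> None:
        """Move to new_state, refusing any step the flow does not allow."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.state.name} -> {new_state.name} not allowed")
        self.state = new_state
        self.history.append(new_state)

    def may_generate(self) -> bool:
        """Only a verified, registered, access-controlled voice may produce audio."""
        return self.state is VoiceState.ACTIVE
```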

Tip: store consent PDFs, audio proofs, and usage logs in one workspace (e.g., DPA folder) and audit quarterly.
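The quarterly audit can be partly automated by comparing usage logs against each consent window, including the “cease new use within [X] days” clause from the short-form template. The sketch below is a hypothetical Python helper (types and field names are ours); it checks only new uses recorded in your log, not assets published before a revocation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional


@dataclass
class Consent:
    voice_id: str
    starts: date
    expires: date
    revoked_on: Optional[date] = None
    cease_within_days: int = 0  # the "[X] days" from the consent template


@dataclass
class UsageEvent:
    voice_id: str
    used_on: date
    asset: str


def last_permitted_day(c: Consent) -> date:
    """Latest date new use is allowed: expiry, or revocation plus the agreed grace period."""
    if c.revoked_on is None:
        return c.expires
    return min(c.expires, c.revoked_on + timedelta(days=c.cease_within_days))


def audit(consents: Dict[str, Consent], usage: List[UsageEvent]) -> List[str]:
    """Return findings for usage with no consent on file or outside the consent window."""
    findings = []
    for ev in usage:
        c = consents.get(ev.voice_id)
        if c is None:
            findings.append(f"{ev.asset}: no consent on file for {ev.voice_id}")
        elif not (c.starts <= ev.used_on <= last_permitted_day(c)):
            findings.append(f"{ev.asset}: used {ev.used_on} outside consent window")
    return findings
```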

Real-World Sampling: How Brands Use ElevenLabs for Business Voice

Before I suggest a playbook, I want you to see how teams actually deploy ElevenLabs at scale. The pattern is consistent across media, sports, retail, and B2B training. Brands start with a defined voice and consent model, pick latency/quality by use case, and wire the stack into existing production—then they measure lift in reach or efficiency. The examples below show what that looks like today, with sources you can check.

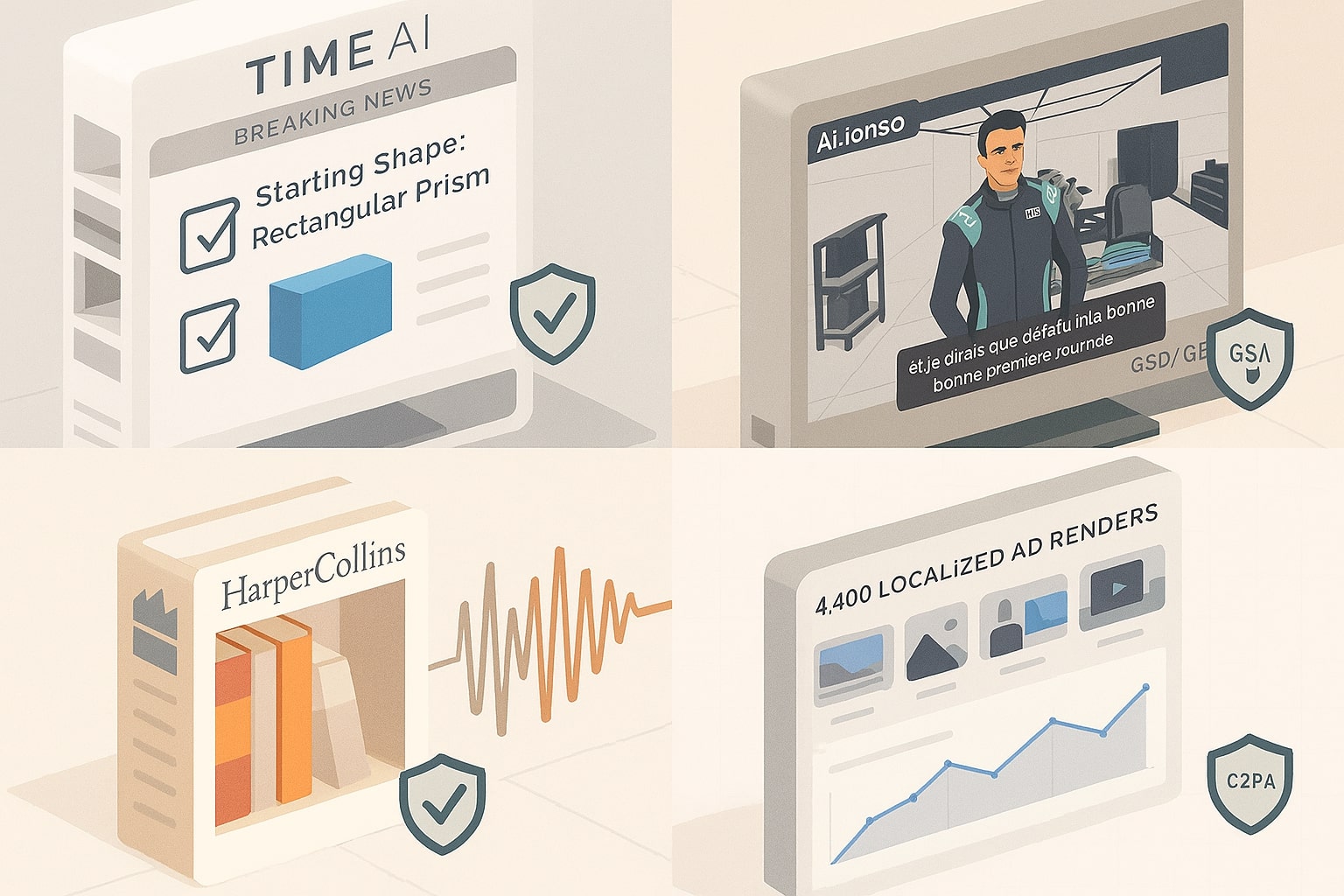

TIME (media): conversational journalism in production.

TIME launched TIME AI, letting readers speak to an AI voice agent with instant audio, translation, and summarization layered on top of TIME’s reporting. ElevenLabs powers the conversational voice layer; links on TIME’s Person of the Year pages demonstrate the experience in the wild. This is a strong reference for publishers who want on-brand, low-latency voice without rebuilding their CMS.[20]

Aston Martin Aramco F1 Team (sports & fandom): the “Ai.lonso” voice.

The team rolled out Ai.lonso, which reads and translates site content in Fernando Alonso’s AI voice in English, Spanish, and French. It’s positioned as accessibility plus personalization, useful if your fan content spans multiple locales but you still want a single, recognizable brand voice.[21]

HarperCollins (publishing): backlist economics unlocked.

HarperCollins uses ElevenLabs to create audio versions of deep backlist titles, projects that wouldn’t be viable with traditional production. For rights-holders with large catalogs, this shows where AI narration complements rather than replaces human-narrated flagships.[22]

Storytel (audiobooks): personalization at platform scale.

A 2023 partnership previewed Storytel’s VoiceSwitcher for personalized listening, and today Storytel lists “Eleven Labs AI” among narrators, signaling durable supply-side adoption. If your roadmap includes user-level personalization, this is the platform pattern to study.[23][24]

Retail (performance marketing): 4,400 localized ads in 48 hours.

A leading U.S. eyewear retailer and agency used AudioStack + ElevenLabs to auto-generate 4,400 hyperlocalized audio ads in two days, driving 40,186 store visits attributed to the campaign across Spotify, iHeart, and Pandora. This is the template if you need dynamic offers, many locations, and broadcast-ready quality fast.[26]

B2B training (sales enablement): measurable lift, not just novelty.

Eagr.ai swapped awkward role-plays for lifelike, low-latency practice sessions powered by ElevenLabs agents; customers reported an 18% average win-rate increase and 30% performance lift among top users within six weeks. If you run enablement at scale, this shows a credible ROI path.[27]

Distribution signal: Spotify accepts ElevenLabs-narrated audiobooks.

As of February 2025, Spotify’s distribution accepts ElevenLabs-narrated titles (via Findaway Voices), citing 29 languages; mainstream distribution reduces channel risk for synthetic narration. Use this to justify procurement if your stakeholders worry about platform policies.[25][2]

A Simulated Pilot You Can Run in 30 Days (Mid-Market E-Commerce)

Here’s a conservative blueprint I’d use if you sell nationally with 200+ SKUs and want consistent, on-brand voice across ads and product videos.

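- Voice & consent. Record your brand spokesperson (or approved VO talent) and capture written consent. Because PVC only clones the verified speaker’s own voice, the spokesperson must be the one who completes PVC verification; if that isn’t workable, use Instant Voice Cloning with documented rights and consent instead. Document intended uses and retention.[13][11]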

- Model & latency. Use Eleven v3 for narrated videos and product explainers; reserve Flash for conversational widgets and IVR where snappy turn-taking matters.[15][16]

- Formats & channels. Export 44.1 kHz WAV for broadcast/YouTube masters and 192 kbps MP3 for social cut-downs; keep μ-law/A-law for telephony trees.[5][6][9]

- Minutes & plan. Start pilots on Creator (PVC + 192 kbps) then move to Pro for PCM workflows; if you’re localizing to 5+ regions, model overages or jump to Scale for team concurrency.[1]

- Localization. For each hero SKU video, transcribe → translate → dub into 3–5 priority languages; re-use the same brand voice for consistency across regions.[7][8]

- Measurement. Tag campaigns with voice-version metadata and A/B test against your previous VO baseline. If you’re omnichannel, add a dynamic-audio test for localized offers (the AudioStack pattern above).[26]
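For the measurement step, one conventional way to compare the AI brand voice against your previous VO baseline is a two-proportion z-test on conversions (clicks, sign-ups, or attributed visits). This is a standard statistical technique we are suggesting, not one the case studies above prescribe; it assumes independent exposures and reasonably large samples.

```python
import math
from typing import Tuple


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float, float]:
    """Compare conversion rates of the baseline VO (A) and the AI brand voice (B).

    Returns (relative_lift, z, two_sided_p) from a pooled-variance two-proportion z-test.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; collect more data")
    z = (p_b - p_a) / se
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    phi = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return (p_b - p_a) / p_a, z, 2 * (1 - phi)
```

A small p-value suggests the difference is unlikely to be noise; the relative lift tells you whether it is big enough to matter for the budget.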

Where ElevenLabs Shines for Marketers

- Speed to production. Choose a stock brand voice or spin up PVC, then ship content in hours, not weeks.

- Quality at scale. 44.1 kHz WAV or 192 kbps MP3 on higher tiers gives you ad-grade polish.[6]

- Multilingual reach. v3’s 70+ languages plus dubbing/STT cover most international rollouts with consistent tone.[15][7][8]

- Governance posture. Clear prohibitions and verification steps support internal risk reviews.[3]

What I’d Watch Before a Full Rollout

1) Rights & consent ops. Ensure you own the voice you clone and document consent; PVC won’t allow cloning others even with consent.[13]

2) Edge-case accents. Pilot with your target locales; use multiple voices if you detect perception gaps.[19]

3) Latency selection. For live agents or IVR, test Flash; for storytelling, try v3 and compare with Multilingual v2.[16]

4) Budget realism. Minutes go fast in video pipelines; model overages against the Scale/Business tiers early.[1]
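For the budget check in point 4, the key number is the monthly volume at which paying overage on a lower tier costs the same as upgrading. The sketch takes the overage rate as a parameter because this review does not state it; read the actual rate from the live pricing page.

```python
from typing import Optional


def overage_breakeven_credits(lower_price: float, lower_credits: int,
                              upper_price: float, upper_credits: int,
                              overage_usd_per_1k: float) -> Optional[float]:
    """Monthly credit volume above which upgrading beats paying overage on the lower plan.

    Lower-plan cost at volume c (for c > lower_credits):
        lower_price + (c - lower_credits) / 1000 * overage_usd_per_1k
    Setting this equal to upper_price and solving for c gives the break-even volume.
    """
    if overage_usd_per_1k <= 0:
        raise ValueError("overage rate must be positive")
    c = lower_credits + (upper_price - lower_price) / overage_usd_per_1k * 1000
    # The comparison only holds if the upper plan's allowance covers that volume.
    return c if c <= upper_credits else None
```

For example, moving from Pro ($99, 500k credits) to Scale ($330, 2M credits) pays off once overage on Pro would exceed the $231 price gap.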

Pros and Cons for ElevenLabs for Business Voice

Here’s a summary of the pros and cons covered in this review.

Pros

- High-fidelity output on higher tiers (up to 44.1 kHz WAV / 192 kbps MP3) [6][5]

- Professional Voice Cloning with consent and identity verification [13][11]

- Multilingual reach: v3 (70+ languages), Dubbing (~32), STT (~99) [15][7][8]

- Enterprise posture and clear prohibited-use policy [4][3]

- Fast time-to-production with stock voices and PVC [12]

Cons

- Free tier is non-commercial; commercial rights require a paid plan [1]

- AI Speech Classifier has limited reliability on some v3 audio [10]

- Accent/bias variability persists; run audience tests [19]

- Minutes/credits can spike costs at scale [1]

- PVC cannot clone someone else’s voice, even with consent [13]


A Practical Verdict You Can Act On

If you need the best AI voice for marketing that balances quality, policy guardrails, and enterprise readiness, ElevenLabs for Business Voice is one of the safest bets today. I’d start at Creator for pilots (PVC + 192 kbps), move to Pro for API PCM workflows, and consider Scale when multiple teams and locales join. If your strategy hinges on conversational agents, factor Flash’s latency into your design. Drop your questions or share your brand-voice hurdles in the comments; I’ll help you pressure-test the setup with your stack and channels.[1]

References

- [1] ElevenLabs: Pricing

- [2] The Verge: Spotify & AI-narrated audiobooks

- [3] ElevenLabs: Prohibited Use Policy

- [4] ElevenLabs: Enterprise overview

- [5] ElevenLabs Docs: TTS capabilities & formats

- [6] ElevenLabs Docs: Studio quality by plan

- [7] ElevenLabs Docs: Dubbing (languages & behavior)

- [8] ElevenLabs Docs: 2025-02-25 STT launch (99 languages)

- [9] ElevenLabs Docs: API output formats & plan gates

- [10] ElevenLabs: AI Speech Classifier

- [11] ElevenLabs: Privacy Policy (voice verification/consent)

- [12] ElevenLabs: Voices & cloning overview

- [13] ElevenLabs Help: PVC, your own voice only

- [14] Reuters: Funding & valuation (Jan 2025)

- [15] ElevenLabs: Eleven v3 (70+ languages)

- [16] ElevenLabs: Homepage (model/latency highlights)

- [17] Illinois BIPA: Statute (voiceprints as biometric identifiers)

- [18] Reuters: 2024 BIPA amendments overview

- [19] arXiv (2025): Accent bias in synthetic voices (Speechify & ElevenLabs)

- [20] ElevenLabs: TIME Brings Conversational AI to Journalism

- [21] ElevenLabs: Ai.lonso with Aston Martin Aramco F1 Team

- [22] HarperCollins Partners with ElevenLabs (customer story)

- [23] Storytel x ElevenLabs: Strategic Partnership

- [24] Storytel: Eleven Labs AI Narrator Listing

- [25] Spotify Newsroom: Accepting ElevenLabs-narrated Audiobooks

- [26] AudioStack + ElevenLabs: 4,400 localized ads case study

- [27] Eagr.ai: Sales Training Lift with Conversational Agents
