Table of Contents

- Why everyone is suddenly asking “Is ElevenLabs legal?”
  - 1.1 AI voices now sound too real
  - 1.2 Deepfake & transparency rules are kicking in
  - 1.3 ElevenLabs is moving into high-profile, commercial use

- What ElevenLabs’ own policies actually say
  - 2.1 Voice Data and consent verification
  - 2.2 Prohibited Use Policy: the red lines
  - 2.3 Voice Library & Iconic Voices: consent as business model
  - 2.4 ElevenLabs’ own content guidelines

- The legal landscape in 2025: what actually matters
  - 3.1 Rights of publicity & privacy
  - 3.2 Deepfake and transparency rules
  - 3.3 Fraud, robocalls, and impersonation

- What counts as valid consent in practice?
  - 4.1 Consent formats that actually hold up
  - 4.2 Special cases: minors, employees, and sensitive contexts

- High-risk scenarios you should avoid (or lawyer-up for)
  - 5.1 Cloning someone without consent
  - 5.2 Celebrity voices outside official channels
  - 5.3 Politics and elections
  - 5.4 Robocalls, lead-gen, and outbound sales

- A practical safe-use checklist for ElevenLabs (2025–2026)
  - 6.1 Ownership & rights
  - 6.2 Compliance & disclosure
  - 6.3 Technical & safety practices

- Example workflows: how different users can stay safe
  - 7.1 For content creators & influencers
  - 7.2 For agencies and brands
  - 7.3 For developers and product teams

- So… is ElevenLabs legal to use in 2025?

AI voice is no longer a novelty in 2025. Brands are launching AI-narrated podcasts, influencers are scaling content in multiple languages, and Hollywood names like Michael Caine and Matthew McConaughey are officially licensing AI versions of their voices through ElevenLabs’ new Iconic Voice Marketplace.[1]

Every time a new project starts, the same question comes up:

“Is using ElevenLabs actually legal – and what kind of consent do I really need?”

This article walks through what ElevenLabs itself expects from you, how regulations are evolving in 2025, and a practical checklist you can apply before you hit “Generate”. It’s written for creators, agencies, developers, and businesses who want to use AI voice safely rather than fight with regulators later.

Important: This article is for general information only and is not legal advice. For high-stakes decisions, talk to a lawyer in your jurisdiction.

1. Why everyone is suddenly asking “Is ElevenLabs legal?”

There are three big forces behind the anxiety.

1.1 AI voices now sound too real

ElevenLabs uses deep learning to produce highly realistic speech and dubbing across dozens of languages.[2] That’s great for accessibility and localization, but it also makes it easy to impersonate someone with just a short sample of their voice.

In 2024–2025, regulators and consumer-protection agencies reported a spike in AI-voice scams and fraud: robocalls mimicking politicians, CEOs, or family members to trick people into paying money or revealing data.[3]

1.2 Deepfake & transparency rules are kicking in

The EU AI Act, which entered into force in 2024 and is rolling out through 2025–2027, explicitly covers deepfakes and AI-generated media.[4] It requires that AI-generated or substantially manipulated images, audio, and video be clearly labeled as such, and that users know when they’re interacting with AI.[4]

Other governments are following: Spain’s government approved a bill that would impose heavy fines for not labelling AI-generated content, and India has proposed rules that would force platforms to visibly label AI audio and deepfakes.[5]

1.3 ElevenLabs is moving into high-profile, commercial use

ElevenLabs doesn’t just provide tools; it now runs a Voice Library with revenue share for creators and an Iconic Voice Marketplace that lets brands license celebrity and historical voices on a consent-based, performer-first model.[1][6]

When big money and famous names are involved, questions about legality, contracts, and ethics inevitably follow.

For a discussion of voice cloning, read: ElevenLabs Voice Cloning Consent Policy (2025): Legal, Ethical, and Product Implications


2. What ElevenLabs’ own policies actually say

Before we look at governments, start with the contract you already have: ElevenLabs’ terms, privacy policy, and use policy.

2.1 Voice Data and consent verification

In its CCPA notice and privacy policy, updated December 2025, ElevenLabs says it collects audio recordings and “Voice Data” you choose to share, and may ask for additional recordings to verify that a voice is genuinely yours.[7]

Crucially, they mention using this verification when another user wants to use your voice with your consent – meaning the platform is designed around a consent-first model, not anonymous voice scraping.[7]

2.2 Prohibited Use Policy: the red lines

The Prohibited Use Policy (updated 3 September 2025) draws sharp lines around impersonation and abuse.[8] It forbids, among other things:

- Creating or using audio specifically to replicate another person’s voice without consent or legal right,

- Using AI voices in ways that harass or sexually exploit someone,

- Generating audio that deceives others about whether it is AI-generated,

- Political deepfakes: impersonating candidates or officials, spreading misleading election content, or engaging in AI-driven voter suppression.

Violating these policies can get your account suspended or terminated, and a documented violation could be cited as evidence of negligence or bad faith if a dispute escalates legally.

2.3 Voice Library & Iconic Voices: consent as business model

ElevenLabs’ Voice Library lets creators upload voices and earn usage-based payouts, with official docs noting that the company has already paid over $1M to creators at a base rate tied to characters generated.[6]

The Iconic Voice Marketplace goes a step further: brands can license AI-generated voices of iconic figures, with ElevenLabs positioning it as a “consent-based, performer-first” system where rights holders approve scripts and uses before audio is delivered.[1][6]

This is important: it shows where the industry is heading. If you don’t have a similarly clear consent trail for your own projects, you’re swimming against the current.

2.4 ElevenLabs’ own content guidelines

On its blog, ElevenLabs repeatedly reminds publishers to avoid misleading, harmful, or illegal content, and to follow platform guidelines when publishing AI-generated material.[9] In a piece on AI-generated podcasts, they state that voice-changer and AI-voice tools are generally legal, but impersonating others without consent can be unethical or even illegal depending on context.[9]

Put simply:

ElevenLabs assumes you have consent and will follow the law.

If you don’t, that’s on you – and they’ve put that in writing.[8]


3. The legal landscape in 2025: what actually matters

Laws differ by country, but there are a few recurring themes.

3.1 Rights of publicity & privacy

Many jurisdictions recognize some version of a “right of publicity” or personality rights: the right to control commercial use of your name, image, and likeness, which increasingly includes your voice. Even where the law isn’t fully updated, courts are starting to treat unauthorized AI voice cloning as a potential violation of these rights.[10]

If you use ElevenLabs to make it sound like a real person:

- without their permission,

- in a commercial setting (ads, branded content, monetized courses, etc.),

you’re likely stepping into a high-risk legal zone, especially if the person is famous or readily identifiable.

3.2 Deepfake and transparency rules

The EU AI Act and related initiatives do a few key things:[4]

- Define deepfakes as AI-generated or manipulated content that resembles real people or events and could appear authentic.[4]

- Require that AI-generated or altered audio and video be clearly labeled as such (Article 50).

- Prepare machine-readable standards and codes of practice for marking AI-generated content, with full deepfake labeling obligations due by August 2026.[11]

Several countries (Spain, India, South Korea) are already moving ahead with national rules that mandate AI labels and impose fines for undisclosed synthetic media.[5][12]

If you publish AI-generated voices to EU or global audiences and do nothing to label them, you’re likely to fall out of compliance as these rules mature.

3.3 Fraud, robocalls, and impersonation

In the US, regulators like the FTC and FCC are focusing on AI-enabled impersonation and robocalls:

- FTC voice cloning initiatives highlight harms from AI impersonating family members, businesses, or public figures.[13]

- The FCC has clarified that AI-generated voices in robocalls fall under the TCPA, with new disclosure and consent proposals specifically targeting AI calls.[14]

So if you’re using ElevenLabs for automated outbound calls or sales outreach, you’re in a high-scrutiny category. The burden is on you to prove consent and proper disclosures.

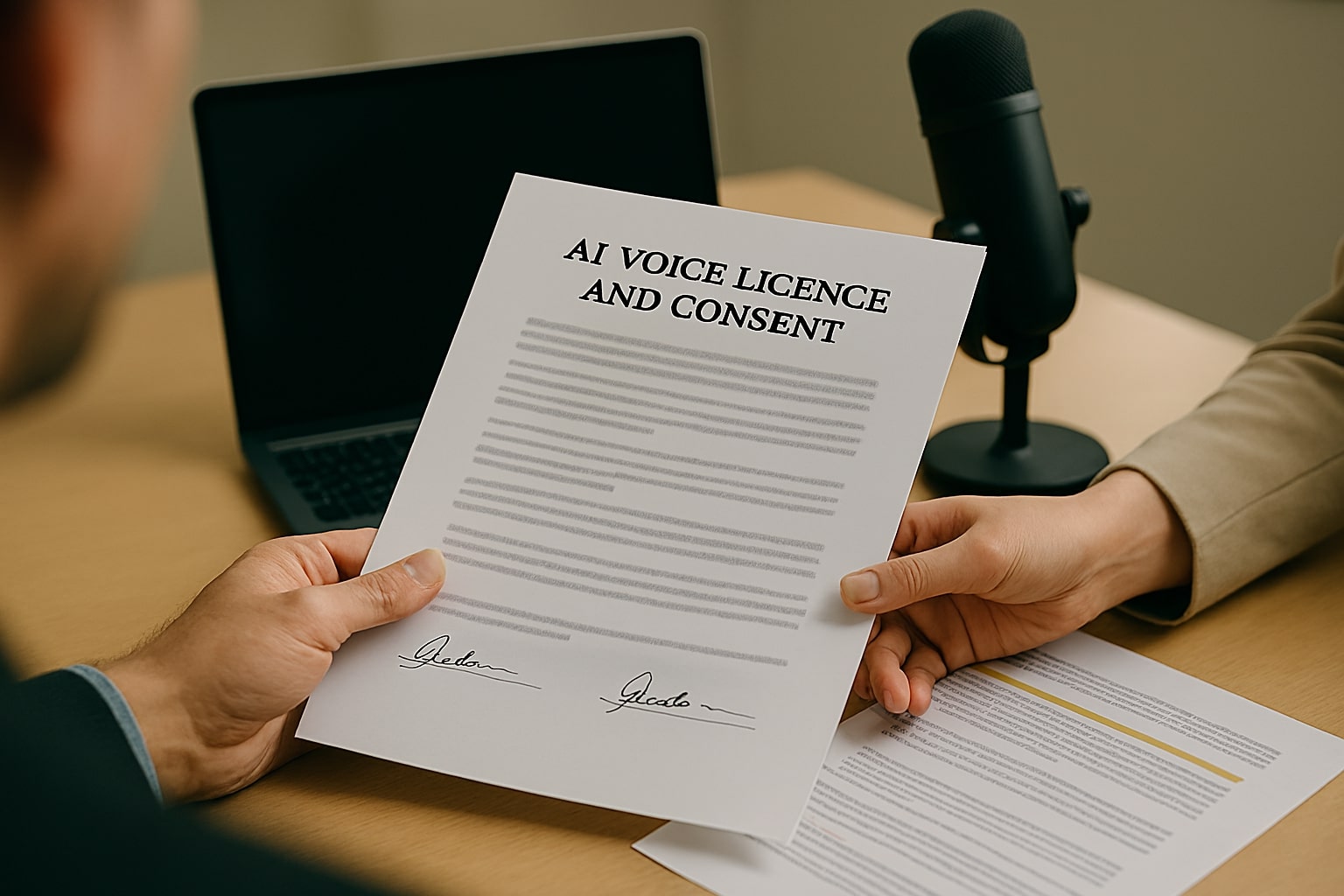

Two people exchanging a signed AI voice licence and consent contract with recording equipment in the background

4. What counts as valid consent in practice?

Let’s make this concrete. When you’re working with a real human’s voice, “consent” should be:

- Informed – they know it’s AI voice cloning, not just a standard recording.

- Specific – they understand what it will be used for (e.g. YouTube channel, brand ads, internal training).

- Documented – you can prove it, if challenged.

- Revocable (ideally) – there’s a clear process if they later change their mind, even if some uses can’t be undone.
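To make the four properties above auditable, a team might store each consent as a structured record rather than an email thread. The sketch below is a minimal, hypothetical schema (`ConsentRecord`, its field names, and `covers()` are our own illustration, not an ElevenLabs API): each field maps to one property, and `covers()` answers a single question, "does this consent clearly cover this use on this date?"

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ConsentRecord:
    """Hypothetical record of one person's consent to AI voice cloning."""

    speaker: str
    informed_of_ai_cloning: bool  # Informed: they knew this was AI cloning, not a plain recording
    purposes: set[str]            # Specific: e.g. {"youtube", "brand_ads", "internal_training"}
    territories: set[str]         # Specific: where the voice may be used, e.g. {"EU", "US"}
    valid_until: date             # Specific: agreed end date
    evidence: list[str] = field(default_factory=list)  # Documented: signed form, consent clip, ...
    revoked_on: Optional[date] = None                  # Revocable: set when consent is withdrawn

    def covers(self, purpose: str, territory: str, on: date) -> bool:
        """Return True only if this consent clearly covers the proposed use on that date."""
        if not self.informed_of_ai_cloning or not self.evidence:
            return False  # uninformed or undocumented consent is treated as no consent
        if self.revoked_on is not None and on >= self.revoked_on:
            return False  # withdrawal blocks new uses from that date on (past assets are out of scope)
        return purpose in self.purposes and territory in self.territories and on <= self.valid_until


record = ConsentRecord(
    speaker="Guest co-host",
    informed_of_ai_cloning=True,
    purposes={"youtube"},
    territories={"EU"},
    valid_until=date(2026, 12, 31),
    evidence=["signed_release.pdf", "consent_clip.wav"],
)
print(record.covers("youtube", "EU", date(2026, 1, 15)))    # True
print(record.covers("brand_ads", "EU", date(2026, 1, 15)))  # False: purpose never agreed
```

Treating missing evidence as "no consent" is a deliberate design choice: it forces the documentation step instead of assuming it.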

4.1 Consent formats that actually hold up

In 2025, safe options typically include:

- Written contracts or consent forms: a talent contract or model release that explicitly covers AI voice cloning, synthetic derivations, allowed media (ads, podcasts, apps), territories, duration, and revenue share (if any).

- Recorded verbal consent: an audio clip where the person states, “I agree that my voice may be cloned using ElevenLabs and used for [purpose], for [duration/territory], under the terms we discussed.” This works best as a backup to a written agreement, not a replacement.

- Platform-level consent: in Voice Library and Iconic Voice Marketplace, ElevenLabs manages the consent and licensing pipeline, and in the Iconic Voice Marketplace rights holders approve scripts and uses before audio is generated.[1][6]

If your “consent” is basically “they once said on WhatsApp this was cool”, that’s weak. For low-stakes, non-commercial experiments it might be socially acceptable, but it will not age well as regulations tighten.

4.2 Special cases: minors, employees, and sensitive contexts

Be extra cautious when:

- working with minors (often requires parental/guardian consent and stricter conditions),

- using employee voices for internal AI assistants or IVR (clarify whether participation is voluntary and what happens if they leave),

- dealing with sensitive sectors like healthcare, finance, politics, or public safety.

In these scenarios, a formal review with legal/compliance teams is a smart move, not bureaucracy.

Also read the detailed discussion on How ElevenLabs Performs in Practice: A Deep Dive into Brand Voice, Quality, Licensing, and Pricing


5. High-risk scenarios you should avoid (or lawyer-up for)

You can think of these as “red zones” where the chance of legal and reputational blowback shoots up.

5.1 Cloning someone without consent

- Using a colleague’s or client’s voice as a joke.

- Cloning a public figure from podcast clips “just to see what happens”.

- Training an internal model on call-center recordings without explicit consent.

Even if you don’t publish the audio, you’re probably violating the platform’s terms and may be breaking privacy or data-protection law.[8][10]

5.2 Celebrity voices outside official channels

If you want to use a celebrity-sounding voice for a campaign:

- Doing it through Iconic Voice Marketplace with licenses and approvals is the “clean” path.[1][6]

- Training your own clone from YouTube interviews is a textbook example of what regulators and ElevenLabs are trying to stop.[8]

5.3 Politics and elections

ElevenLabs explicitly bans political impersonation and campaigning in its Prohibited Use Policy.[8] At the same time, the EU AI Act and several national laws treat election-related deepfakes as especially harmful.[4][10]

If your project touches elections, campaign messaging, or political advocacy, assume zero tolerance for synthetic impersonation.

5.4 Robocalls, lead-gen, and outbound sales

AI-generated voices in automated calls are under heavy scrutiny from regulators and telco providers.[13][14]

If you’re combining ElevenLabs with auto-dialers or agentic workflows:

- get explicit, recorded consent for AI calls,

- disclose that the caller is AI-generated,

- and talk to a lawyer before scaling.
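The three steps above can be enforced as a gate in code before any call is placed. A minimal sketch, assuming a hypothetical `Contact` record kept in your own CRM and a hypothetical disclosure sentence; it calls no ElevenLabs or telephony API, and the `legal_reviewed` flag simply records that counsel has signed off. It is not a substitute for TCPA advice.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical disclosure that must open every AI-voiced call.
AI_DISCLOSURE = "This call uses an AI-generated voice."


@dataclass
class Contact:
    phone: str
    ai_call_consent_recording: Optional[str]  # path or ID of the recorded consent for AI calls, if any
    opted_out: bool = False


def call_blockers(contact: Contact, opening_line: str, legal_reviewed: bool) -> list[str]:
    """Return the reasons this AI call must not be placed (an empty list means clear to dial)."""
    blockers = []
    if contact.opted_out:
        blockers.append("contact has opted out")
    if not contact.ai_call_consent_recording:
        blockers.append("no recorded consent for AI calls")
    if not opening_line.startswith(AI_DISCLOSURE):
        blockers.append("call does not open with an AI disclosure")
    if not legal_reviewed:
        blockers.append("campaign not reviewed by counsel")
    return blockers


# Example: consent is on file, but the script skips the disclosure.
c = Contact(phone="+15550100", ai_call_consent_recording="consents/0001.wav")
print(call_blockers(c, "Hi! Quick question about your policy.", legal_reviewed=True))
# ['call does not open with an AI disclosure']
```

Returning every blocker, rather than stopping at the first, gives the campaign owner the full list to fix before scaling.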

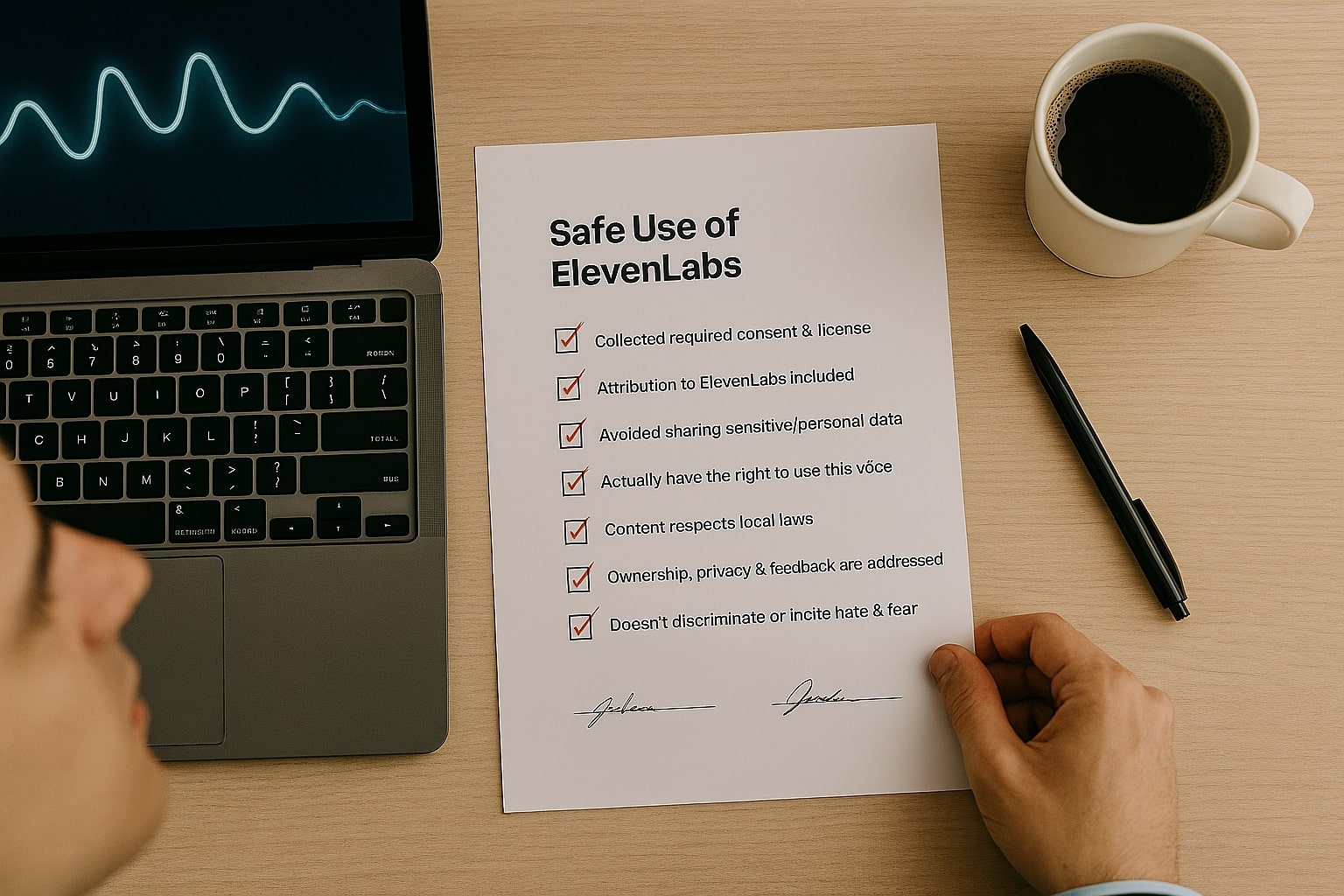

Desk with a safe-use checklist for ElevenLabs voice cloning next to a laptop and coffee mug

6. A practical safe-use checklist for ElevenLabs (2025–2026)

Use this as a pre-flight checklist before new projects:

6.1 Ownership & rights

- Whose voice is this?
  - Myself / an employee / a contractor / a third-party talent / a celebrity?

- Do I have written permission to:
  - clone their voice with AI?
  - use it for commercial purposes?
  - modify language, tone, or content (e.g., translations, dubbing)?

- Is the agreement clear on:
  - where it will be used (platforms, territories),
  - how long,
  - and how they will be compensated (flat fee, revenue share, royalty)?
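The 6.1 questions can also be run as a pre-flight check that lists whatever is still missing. A minimal sketch: the checklist keys, the `voice_source` values, and `rights_gaps()` are hypothetical, and the answers must come from a person who has actually read the agreement.

```python
# Hypothetical keys mirroring the 6.1 checklist.
REQUIRED_PERMISSIONS = ("ai_cloning", "commercial_use", "modification")
REQUIRED_TERMS = ("platforms_territories", "duration", "compensation")


def rights_gaps(voice_source: str, permissions: dict[str, bool], terms: dict[str, str]) -> list[str]:
    """Return every unmet 6.1 item; an empty list means the rights questions are answered."""
    if voice_source == "myself":
        return []  # cloning your own voice needs no third-party permission
    # Written permission must cover cloning, commercial use, and modification (translation, dubbing).
    gaps = [f"no written permission for {p}" for p in REQUIRED_PERMISSIONS if not permissions.get(p, False)]
    # The agreement must say where, how long, and how the speaker is paid.
    gaps += [f"agreement silent on {t}" for t in REQUIRED_TERMS if not terms.get(t, "").strip()]
    return gaps


print(rights_gaps(
    "contractor",
    permissions={"ai_cloning": True, "commercial_use": True},
    terms={"platforms_territories": "YouTube, worldwide", "duration": "2 years", "compensation": ""},
))
# ['no written permission for modification', 'agreement silent on compensation']
```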

6.2 Compliance & disclosure

- Do I need to label this as AI-generated audio?
  - If you’re in or targeting the EU, assume yes for anything that counts as a deepfake or synthetic voice.[4]

- Is my disclosure noticeable enough?
  - For audio: a spoken line like “This narration was created using AI voice technology.”
  - For pages or players: a visible badge or line in the description.

- Is this content political, financial, or otherwise sensitive?
  - If yes, escalate to legal/compliance before publishing.
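The disclosure questions above can be turned into a small helper that decides whether to label and returns both the spoken and the written label. A minimal sketch under our own simplifying assumptions: it treats any synthetic voice published to EU audiences as requiring a label (the cautious reading the checklist recommends), still supplies labels elsewhere as good practice, and flags sensitive topics for review. `plan_disclosure()` and the topic set are hypothetical; the label wording comes from this article.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical topics that trigger a legal/compliance review before publishing.
SENSITIVE_TOPICS = {"politics", "finance", "health", "public_safety"}

# Label wording taken from this article.
SPOKEN_LABEL = "This narration was created using AI voice technology."
WRITTEN_LABEL = "Narration generated with AI voice."


@dataclass
class DisclosurePlan:
    label_required: bool               # our cautious reading, not a legal determination
    spoken_line: Optional[str]         # line to read at the start of the audio
    description_badge: Optional[str]   # text for the page, player, or description
    needs_legal_review: bool


def plan_disclosure(uses_synthetic_voice: bool, targets_eu: bool, topics: set[str]) -> DisclosurePlan:
    """Apply the 6.2 checklist as a conservative heuristic."""
    review = bool(topics & SENSITIVE_TOPICS)  # sensitive content escalates regardless of voice type
    if not uses_synthetic_voice:
        return DisclosurePlan(False, None, None, review)
    return DisclosurePlan(
        label_required=targets_eu,  # in or targeting the EU: assume a label is required
        spoken_line=SPOKEN_LABEL,   # labels are still supplied outside the EU as good practice
        description_badge=WRITTEN_LABEL,
        needs_legal_review=review,
    )


plan = plan_disclosure(uses_synthetic_voice=True, targets_eu=True, topics={"finance"})
print(plan.label_required, plan.needs_legal_review)  # True True
```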

6.3 Technical & safety practices

- Are we following ElevenLabs’ Prohibited Use Policy?[8]
  - No harassment, hate, sexual exploitation, or deceptive impersonation.

- Do we log what was generated and where it’s used?
  - Keep basic logs for scripts, dates, and campaigns; this matters if complaints arise.

- Are we respecting takedown and revocation requests?
  - If a voice owner asks to stop, have a process to sunset that voice from future projects, even if you can’t recall every past asset.
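The logging and revocation items can be backed by something as simple as an append-only file. A minimal sketch using only the Python standard library; the `GenerationLog` class, file names, and JSON fields are hypothetical, and the local revocation list is our own assumption, not an ElevenLabs feature. It records script, date, and campaign for each generation and refuses to record a new one for a voice whose owner has asked to stop, so a pipeline that calls `record()` before generating cannot reuse a revoked voice.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


class VoiceRevokedError(Exception):
    """Raised when a project tries to use a voice its owner has withdrawn."""


class GenerationLog:
    """Append-only JSON Lines log of what was generated, plus a revocation list."""

    def __init__(self, directory: Path):
        directory.mkdir(parents=True, exist_ok=True)
        self.log_path = directory / "generations.jsonl"
        self.revoked_path = directory / "revoked_voices.json"

    def revoked_voices(self) -> set[str]:
        if not self.revoked_path.exists():
            return set()
        return set(json.loads(self.revoked_path.read_text(encoding="utf-8")))

    def revoke(self, voice_id: str) -> None:
        """Record a takedown request: the voice is blocked for all future generations."""
        voices = self.revoked_voices() | {voice_id}
        self.revoked_path.write_text(json.dumps(sorted(voices)), encoding="utf-8")

    def record(self, voice_id: str, script: str, campaign: str) -> dict:
        """Check revocations, then append one entry (script, timestamp, campaign)."""
        if voice_id in self.revoked_voices():
            raise VoiceRevokedError(f"voice {voice_id!r} has been revoked by its owner")
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "voice_id": voice_id,  # your own identifier for the voice used
            "campaign": campaign,
            "script": script,
        }
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")
        return entry


log = GenerationLog(Path(tempfile.mkdtemp()))
log.record("voice_123", "Welcome to episode 12.", campaign="podcast-s2")
log.revoke("voice_123")
try:
    log.record("voice_123", "Welcome to episode 13.", campaign="podcast-s2")
except VoiceRevokedError as err:
    print(err)  # voice 'voice_123' has been revoked by its owner
```

Past entries stay in the log on purpose: a revocation stops future use, while the history remains available if a complaint arises.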

Also read: How to Get ElevenLabs Voice Cloning Consent, The Fastest Step by Step Guide for Creators


7. Example workflows: how different users can stay safe

7.1 For content creators & influencers

- Use your own voice or official Voice Library voices for narration.

- Include a small banner or line in descriptions: “Narration generated with AI voice.”

- If you collaborate with friends or guests, send a simple voice-cloning consent form before recording.[6][9]

7.2 For agencies and brands

- Treat AI voice agreements like talent contracts, not side notes.

- For celebrity-style work, strongly prefer official marketplaces over DIY cloning.

- Add AI-use clauses to your master services agreement (MSA) and privacy notice, especially if you process customer support calls or UGC.

7.3 For developers and product teams

- Bake consent capture & audit logs directly into your flows (e.g. a consent screen before users record voices).

- Use clear labels in UIs where users interact with AI voices, to align with upcoming AI transparency rules.[4][11]

- For enterprise clients, offer configuration options for watermarking or metadata tags that signal AI-generated audio.
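For the metadata option, one lightweight approach is a sidecar manifest written next to each generated file. The sketch below is hypothetical: the `.ai.json` naming, the field names, and both functions are our own convention, not ElevenLabs output or a formal provenance standard. A sidecar can be stripped, so it complements visible labels and any watermarking your vendor offers rather than replacing them. The SHA-256 hash ties the manifest to the exact audio bytes.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def _manifest_path(audio_path: Path) -> Path:
    # e.g. episode12.mp3 -> episode12.mp3.ai.json (hypothetical naming convention)
    return audio_path.with_name(audio_path.name + ".ai.json")


def write_ai_audio_manifest(audio_path: Path, voice_id: str, consent_ref: str) -> Path:
    """Write a sidecar JSON next to the audio file declaring it AI-generated."""
    manifest = {
        "ai_generated": True,
        "generator": "ElevenLabs",   # the tool that produced the audio
        "voice_id": voice_id,        # your own identifier for the voice used
        "consent_ref": consent_ref,  # pointer to the consent record behind this voice
        "sha256": hashlib.sha256(audio_path.read_bytes()).hexdigest(),  # binds manifest to these bytes
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
    path = _manifest_path(audio_path)
    path.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return path


def manifest_matches(audio_path: Path) -> bool:
    """Return True if a manifest exists and its hash still matches the audio bytes."""
    path = _manifest_path(audio_path)
    if not path.exists():
        return False
    manifest = json.loads(path.read_text(encoding="utf-8"))
    return manifest.get("sha256") == hashlib.sha256(audio_path.read_bytes()).hexdigest()


demo = Path(tempfile.mkdtemp()) / "episode12.mp3"
demo.write_bytes(b"placeholder bytes standing in for real audio")
print(write_ai_audio_manifest(demo, voice_id="voice_123", consent_ref="consents/0001").name)  # episode12.mp3.ai.json
print(manifest_matches(demo))  # True
```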


8. So… is ElevenLabs legal to use in 2025?

Short answer:

Yes, using ElevenLabs is generally legal – what gets you into trouble is how you use it.[9][10]

If you:

- clone only voices you have the right to use,

- get clear, documented consent,

- label AI-generated audio transparently,

- avoid political impersonation, scams, and harmful deepfakes,

- and follow ElevenLabs’ own safety rules,

you’re not just “trying to stay out of court” – you’re actively building a brand that respects the people whose voices make your content possible.

If the stakes are high (large ad spend, celebrity campaigns, regulated industries), treat this article as a baseline checklist, then sit down with a lawyer who understands AI, IP, and your target markets.

References

- [1] ElevenLabs: Iconic Voice Marketplace overview (consent-based, performer-first licensing)

- [2] Vestbee: ElevenLabs funding profile & technical overview (multilingual speech synthesis)

- [3] GoAnswer: AI voice scams in 2025 and regulatory responses

- [4] European Parliament: EU AI Act and transparency requirements for AI-generated content

- [5] Reuters: Spain bill mandating labelling of AI-generated content (2025)

- [6] ElevenLabs: How to monetize your voice with the Voice Library (creator payouts)

- [7] ElevenLabs: CCPA notice describing Voice Data collection and consent verification

- [8] ElevenLabs: Prohibited Use Policy (updated Sept 2025)

- [9] ElevenLabs: AI-generated podcasts & legality of voice changers / impersonation

- [10] Regula: Deepfake and AI-manipulation regulations overview 2025

- [11] RPC: EU code of practice on AI transparency under Article 50 AI Act

- [12] AP News: South Korea’s upcoming labelling rule for AI-generated ads (2026)